How to measure the progress of your transformation project

- 20 June, 2018 15:03

A common challenge for organisations going through a technology transformation is how to measure progress and the impact of the new capabilities on business outcomes. In other words, how do you make measurement more data-driven rather than relying on anecdotes like, “yeah, we feel things are improving.”

A sensemaking mechanism is vital for leadership teams to understand whether the organisation is improving its software delivery performance. It also works as a catalyst to increase the investment capacity in the transformation program.

This article is intended to provoke thoughts around performance data science and how to nurture a data-driven culture. It’s not a definitive guide or a set of recommendations.

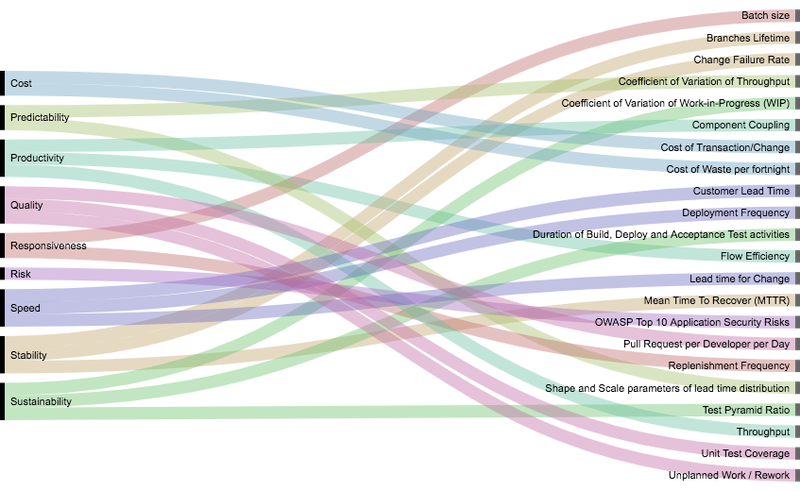

The picture below shows nine business dimensions: speed, stability, predictability, productivity, sustainability, quality, risk, responsiveness, and cost. These dimensions have a few metrics that can be used like building blocks to construct your transformation dashboard.

I’m now going to navigate across each of them and provide some actionable insights.

1. Speed

Customer lead time

This is the time between a customer order being accepted and delivered. A customer could be both internal and external and the order, for example, could be your feature or user stories. The lead time shows your ability to complete end-to-end work that matters to your customer. If you can measure only one thing, measure lead time.

Lead time for change

This shows how effective your change management process is, going from code commit to production. High performers do everything needed in less than one hour. That’s 440 times faster than their low performing peers. A cumbersome process to release changes (e.g. CAB meetings, later integration and manual testing) increases the cost of transaction, consequently increasing batch size, killing business responsiveness. This is lean product development 101.

Deployment frequency

This is how frequently you deploy software into production. High performers have decoupled deployment from release. They are deploying into production multiple times per day. Research shows they are deploying software 46 times more frequently than their low performing peers.

Although it’s a vanity metric, your deployment frequency is a leading indicator of how smooth your path to production is (small batches, mature CI/CD practices, effective change management, etc.).

2. Predictability

Coefficient of variation of throughput

A predictable software delivery team will have low variability in throughput and lead time. Delivering none and then a lot, and then none and then a lot is not good. This disruptive variability is harmful to both business and IT.

Shape and scale parameters of lead time distribution

Lead time distributions of knowledge work are exponential distributions, instead of normal distributions. To understand predictability of lead time, we use the Weibull Shape Parameter (healthy range between 1-2) and the Weibull Scale Parameter (healthy range between 0-10).

3. Risk

OWASP Top 10 application security risks

The Open Web Application Security Project (OWASP) is an open community dedicated to enabling trust in applications and APIs. Risks are rated by severity (technical impact) and likelihood. You probably should have an improvement driver to eradicate high-severe and critical exposures from your portfolio.

4. Sustainability

Coefficient of variation of work-in-progress (WIP)

The 8th Principle of the Agile Manifesto suggests that teams should be able to maintain a constant pace indefinitely. High WIP reduces quality and increases lead time and burnout. Teams need to find an optimum amount of WIP and maintain the flow. My empirical observation suggests that a healthy range for the WIP CV is between 30 per cent and 70 per cent.

Duration of build, deploy and acceptance test activities

The length of these activities is a leading indicator of your teams’ ability to maintain a sustainable pace. Define the target duration for each activity that makes sense for your unique context and create an improvement driver to meet these targets. Once met, determine a healthy range for these durations and let them operate without intervention within these ranges.

Test pyramid ratio

The shape of your test pyramid is another leading indicator of sustainability. Aim for a large base, typically 70 per cent of unit tests, providing fast and cheap feedback. Around 20 per cent of integration tests and 10 per cent of end-to-end tests on top of that, including the exploratory tests.

Page Break

5. Cost

Cost of transaction/change

There is a direct correlation between the cost of transaction and batch size. Cost of transaction in software delivery is composed of all steps required to release software (e.g. integration, manual activities, CAB meetings, etc.). I’ve learnt that visualising the cost of making a change (#people * #hours * hour rate) in dollars helps create a sense of urgency to automate and simplify change management activities, reducing the lead time for change. That's a major step for enabling small and frequent changes.

Cost of waste per fortnight

Visualising waste in dollars is a marvellous way to motivate continuous improvement. Waste could be recurring or one-offs. It could impact an individual, a team or an entire portfolio. It could be in the form of impediments, dependencies, downtime, time in queue or rework. In knowledge work, there will always be a waste. The question is, what is the healthy range?

6. Responsiveness

Replenishment frequency

Responsiveness is your ability to foresee and respond rapidly and safely to changing conditions, whether it is market sentiment, disruptive innovation, or political and regulatory changes. Keeping your options open and deferring commitment increases robustness, allowing you to make smarter commitments more frequently.

Batch size

Batch size is a primary leading indicator of responsiveness and has multiple facets. The batch size of each piece of work, the batch size of work-in-progress (WIP) and the batch size of each code change (measured by code churn in your code commit, pull request and code deployment).

Working in small batches increases the likelihood of going from start to finish without being interrupted, procrastinating or abandoning the work, making yourself available for the next most important small piece of work.

7. Stability

Change failure rate

A percentage of changes which result either in degraded service or subsequentially requires remediation. High performers are 5 times less likely to fail and operate in the range of 0 to 15 per cent.

Mean time to recover (MTTR)

MTTR shows how long it usually takes to restore service when an incident occurs. High performers recover from failure 96 times faster than their low performing peers, generally in less than one hour.

Branch lifetime

Long-lived branches are commonly leading indicators of instability. Identify which branch lifetime will help you move towards continuous delivery and make it an improvement driver.

8. Quality

Unplanned work/rework

The ratio of planned and unplanned work shows the quality of both engineering and management practices. An 80 to 20 per cent mark could be a good start, then 90 to 10 per cent, eventually reaching 95 to 5 per cent, depending on your context.

Unit test coverage

Comprehensive test automation is vital to enable small and frequent changes. Although it could be seen as a vanity metric, where more is always better, it is a leading indicator for reducing the lead time for change, batch size and amount of unplanned work.

80 per cent of coverage is a good target for an improvement driver. Teams doing a technique called test driven development are naturally reaching high code coverage.

Pull requests (PR) per developer per day

Code should be integrated earlier and often into trunk, and your code base should always be in a shippable state. That’s continuous integration 101.

Like code coverage, more is always better, and you should expect to see multiple PRs per day. That’s a leading indicator of batch size, branch lifetime, the lead time for change and cost of transaction. A healthy range should be around the 75 to 100 per cent mark.

9. Productivity

Throughput

That’s the ultimate evidence of productivity that shows how many pieces of work you can get done in a certain period (week, fortnight, month). There’s a correlation between WIP and lead time, explained by the Little’s Law in Queue Theory. By reducing WIP, you reduce lead time.

My empirical observation suggests that until a certain level, in Knowledge Work, reducing lead time increases throughput.

Flow efficiency

The ratio between value-added time and waiting time. In my experience, it is one of the strongest improvement drivers. It measures the efficiency that a piece of work navigates through the value stream, across different silos until it gets done.

Low flow efficiency means lots of wait time, thus waste. Teams not managing flow usually have a flow efficiency around 5 to 15 per cent, meaning every piece of work on average idles in queues for about 85 per cent of its time. A good target for knowledge work is around 40 to 50 per cent.

Component coupling

The level in which components depend on each other defines the extent to which teams or services can test and deploy their applications on demand, without requiring orchestration with other teams or services.

Tightly coupled architectures (aka monoliths) are brittle, hard to change, test and release. Loosely coupled architectures (aka microservices) are lean, with a single responsibility, without many dependencies, allowing teams to work, deploy, fail and scale independently, increasing productivity and business responsiveness.

Visualising component coupling helps you to make smarter decisions around software design and team structures. Efferent and afferent coupling metrics helps to visualise the component coupling.

I hope this has provided you with some actionable insights. Now grab your team, swarm around a whiteboard and draft a dashboard for your transformation. During the exercise, make sure to classify the type of your metrics, and set the thresholds, targets and healthy ranges. As you learn, come back and fine-tune them.

When setting targets for time-based metrics, try to think in distributions instead of averages. For instance, instead of aiming for an average lead time of seven days, aim for having 85 per cent of your work being completed within seven days.

This article focused on the WHAT. All data showed above are random with a sole purpose of illustrating the thought. If you’re interested in the HOW (sourcing, massaging and visualising the data), let me know and I’ll make it the next article in the series.

Marcio Sete is the head of technology services at consulting company Elabor8.